OpenNovelty: An LLM-powered Agentic System for Verifiable Scholarly Novelty Assessment

Published as an arXiv preprint, 2026

OpenNovelty focuses on a difficult but essential part of research evaluation: verifiable novelty assessment with explicit evidence traces.

Why this system matters

Novelty review is usually time-constrained, inconsistent across reviewers, and highly dependent on retrieval coverage. OpenNovelty reframes this as a reproducible pipeline problem instead of a one-shot LLM judgment.

Four-phase pipeline

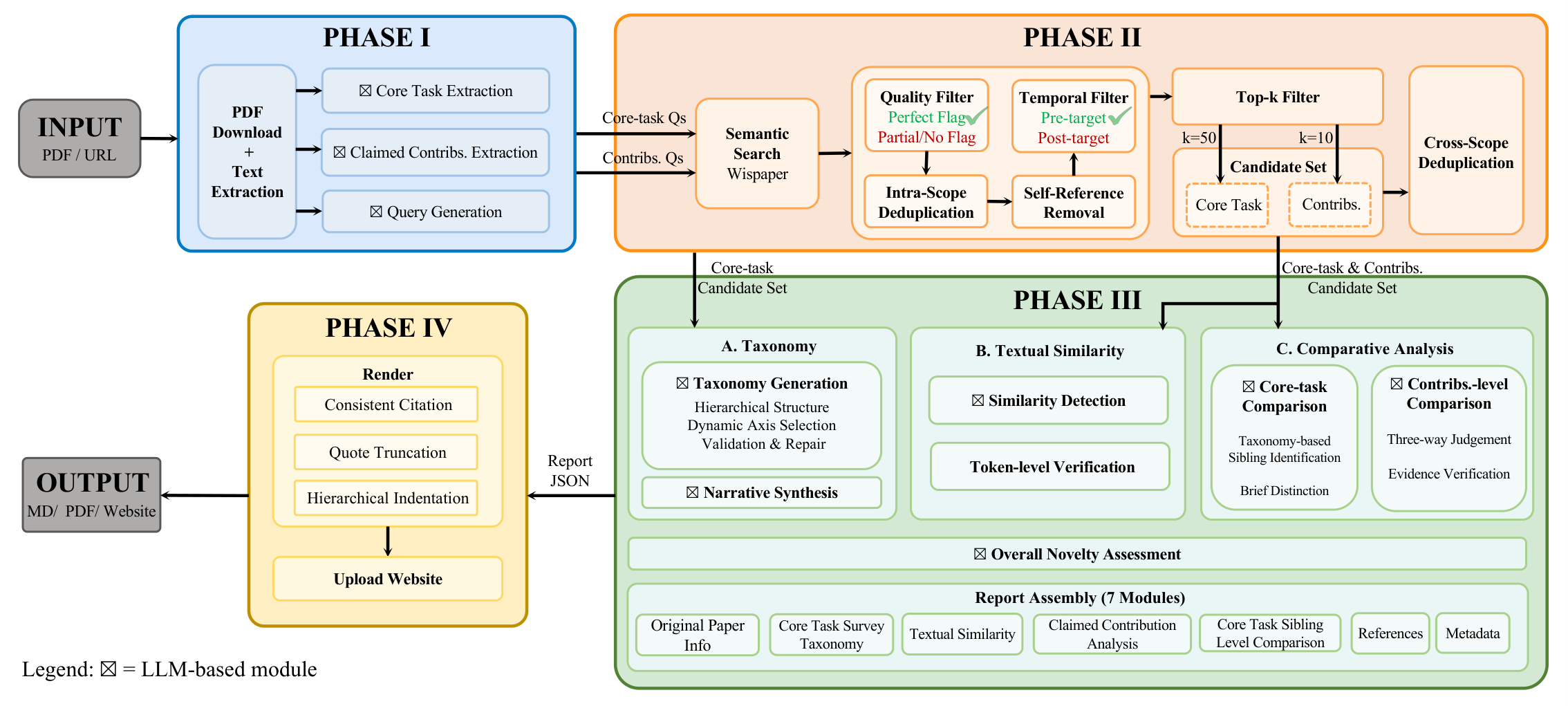

The public repository describes a staged workflow:

- Phase I (Information Extraction): extract paper text, core task, and contribution claims.

- Phase II (Literature Retrieval): retrieve related work candidates and build citation indices.

- Phase III (Deep Analysis): compare claims with retrieved literature and classify novelty evidence.

- Phase IV (Report Generation): export structured novelty reports (Markdown/PDF) with citations and snippets.
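To make the staged design concrete, here is a minimal sketch of four phases that communicate only through files on disk. It is not the OpenNovelty implementation: the word-overlap logic is a toy stand-in for LLM-based extraction and analysis, the JSON schemas, the labels `overlap_found` / `no_overlap_found`, and the report name `novelty_report.md` are all assumptions made for illustration. Only the three JSON file names come from the repository description.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict, List


def _words(text: str) -> set:
    return {w.lower().strip(".,") for w in text.split()}


def save(path: Path, payload: Dict) -> Path:
    path.write_text(json.dumps(payload, indent=2), encoding="utf-8")
    return path


def load(path: Path) -> Dict:
    return json.loads(path.read_text(encoding="utf-8"))


def phase1(paper_text: str, out: Path) -> Path:
    # Toy extraction: every non-empty line counts as one contribution claim.
    claims = [ln.strip() for ln in paper_text.splitlines() if ln.strip()]
    return save(out / "phase1_extracted.json", {"claims": claims})


def phase2(out: Path, corpus: List[str]) -> Path:
    # Toy retrieval: keep corpus entries that share a word with any claim.
    claims = load(out / "phase1_extracted.json")["claims"]
    vocab = set().union(*[_words(c) for c in claims]) if claims else set()
    hits = [doc for doc in corpus if vocab & _words(doc)]
    return save(out / "citation_index.json", {"candidates": hits})


def phase3(out: Path) -> Path:
    # Toy analysis: attach overlapping candidates to each claim as evidence.
    claims = load(out / "phase1_extracted.json")["claims"]
    candidates = load(out / "citation_index.json")["candidates"]
    results = []
    for claim in claims:
        evidence = [c for c in candidates if _words(claim) & _words(c)]
        label = "overlap_found" if evidence else "no_overlap_found"
        results.append({"claim": claim, "label": label, "evidence": evidence})
    return save(out / "phase3_complete_report.json", {"results": results})


def phase4(out: Path) -> Path:
    # Render a Markdown report from the Phase III artifact only.
    lines = ["# Novelty report", ""]
    for item in load(out / "phase3_complete_report.json")["results"]:
        lines.append(f"- {item['claim']} [{item['label']}]")
        lines.extend(f"  - evidence: {e}" for e in item["evidence"])
    report = out / "novelty_report.md"  # hypothetical file name
    report.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return report


def run_demo() -> str:
    with tempfile.TemporaryDirectory() as tmp:
        out = Path(tmp)
        phase1("Sparse retrieval reranking\nQuantum annealing scheduler", out)
        phase2(out, ["A survey of retrieval methods", "Cooking with herbs"])
        phase3(out)
        return phase4(out).read_text(encoding="utf-8")


if __name__ == "__main__":
    print(run_demo())
```

Because each phase reads only the previous phase's file, any single stage can be rerun or inspected without repeating the others, which is the property that makes the assessment traceable.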

Output artifacts

Typical outputs include:

- phase1_extracted.json

- citation_index.json

- phase3_complete_report.json

- final novelty report (.md/.pdf)

This makes the full process auditable, with intermediate artifacts available for debugging and review.
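As a small auditing aid, the sketch below checks which of the intermediate artifacts are present for a given paper. The three file names are taken from the list above. Where they sit inside a paper's output directory is an assumption, so the search is recursive, and the helper names `find_artifacts` and `missing_artifacts` are hypothetical.

```python
import tempfile
from pathlib import Path
from typing import Dict, List, Optional

# File names as listed in the text. Their exact location inside the
# paper's output directory is an assumption, hence the recursive search.
EXPECTED_ARTIFACTS = (
    "phase1_extracted.json",
    "citation_index.json",
    "phase3_complete_report.json",
)


def find_artifacts(paper_dir: Path) -> Dict[str, Optional[Path]]:
    """Map each expected artifact name to its first match, or None if absent."""
    found: Dict[str, Optional[Path]] = {}
    for name in EXPECTED_ARTIFACTS:
        matches = sorted(paper_dir.rglob(name))
        found[name] = matches[0] if matches else None
    return found


def missing_artifacts(paper_dir: Path) -> List[str]:
    """Return the names of expected artifacts that were not found."""
    return [name for name, path in find_artifacts(paper_dir).items() if path is None]


if __name__ == "__main__":
    # Demo on a throwaway directory that only contains Phase I and II outputs.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "phase1_extracted.json").write_text("{}", encoding="utf-8")
        (root / "citation_index.json").write_text("{}", encoding="utf-8")
        print("missing:", missing_artifacts(root))
```

A check like this is a quick way to see which phase a run stopped at before opening any of the files.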

Quick-start workflow

The repository provides script entrypoints for each phase:

# Phase 1

python scripts/run_phase1_batch.py --papers "<paper-url>" --out-root output/demo --force-year 2026

# Phase 2

bash scripts/run_phase2_concurrent.sh <paper_id> --base-dir output/demo

# Phase 3

bash scripts/run_phase3_all.sh output/demo/<paper_id>

# Phase 4

bash scripts/run_phase4.sh output/demo/<paper_id>
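The four commands can also be chained from one driver script. The sketch below only assembles the command lines shown above and, by default, prints them instead of running them. It assumes the caller already knows the `<paper_id>` that Phase 1 assigns (how that id is derived from the paper URL is not stated here), and the function names `build_commands` and `run_all` are hypothetical.

```python
import shlex
import subprocess
from typing import List


def build_commands(paper_url: str, paper_id: str, out_root: str = "output/demo",
                   force_year: int = 2026) -> List[List[str]]:
    """Assemble the per-phase commands from the quick-start listing."""
    paper_dir = f"{out_root}/{paper_id}"
    return [
        ["python", "scripts/run_phase1_batch.py", "--papers", paper_url,
         "--out-root", out_root, "--force-year", str(force_year)],
        ["bash", "scripts/run_phase2_concurrent.sh", paper_id,
         "--base-dir", out_root],
        ["bash", "scripts/run_phase3_all.sh", paper_dir],
        ["bash", "scripts/run_phase4.sh", paper_dir],
    ]


def run_all(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            # check=True stops the chain at the first failing phase.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Placeholder URL and id, for illustration only.
    run_all(build_commands("https://example.org/paper", "demo123"))
```

Passing argument lists rather than one shell string avoids quoting problems when the paper URL contains characters such as `&` or `?`.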

Engineering notes from the repo

- Python 3.8+ environment

- modular scripts for batch and single-paper workflows

- retrieval, analysis, and rendering decoupled by intermediate JSON artifacts

- some external service dependencies are marked as evolving in the current release

Practical takeaway

OpenNovelty is useful when you want traceable novelty review, especially for internal pre-review, large-scale triage, or evidence-grounded reviewer assistance where “why this is novel (or not)” must be inspectable.

Citation

@article{abs-2601-01576,
  author       = {Ming Zhang and
                  Kexin Tan and
                  Yueyuan Huang and
                  Yujiong Shen and
                  Chunchun Ma and
                  Li Ju and
                  Xinran Zhang and
                  Yuhui Wang and
                  Wenqing Jing and
                  Jingyi Deng and
                  Huayu Sha and
                  Binze Hu and
                  Jingqi Tong and
                  Changhao Jiang and
                  Yage Geng and
                  Yuankai Ying and
                  Yue Zhang and
                  Zhangyue Yin and
                  Zhiheng Xi and
                  Shihan Dou and
                  Tao Gui and
                  Qi Zhang and
                  Xuanjing Huang},
  title        = {OpenNovelty: An LLM-powered Agentic System for Verifiable Scholarly
                  Novelty Assessment},
  journal      = {CoRR},
  volume       = {abs/2601.01576},
  year         = {2026},
  url          = {https://doi.org/10.48550/arXiv.2601.01576},
  doi          = {10.48550/ARXIV.2601.01576},
  eprinttype   = {arXiv},
  eprint       = {2601.01576},
  biburl       = {https://dblp.org/rec/journals/corr/abs-2601-01576.bib},
  bibsource    = {dblp computer science bibliography, https://dblp.org}
}